Knowledge Technology at Humanoids'24

10 December 2024

Our group members Hassan Ali, Josua Spisak and Kun Chu presented their papers at the Humanoids'24 conference in Nancy, France. Here is more information about the papers:

Paper 1

Authors: Hassan Ali, Philipp Allgeuer, Carlo Mazzola, Giulia Belgiovine, Burak Can Kaplan, Lukáš Gajdošech, Stefan Wermter

Abstract: Large Language Models (LLMs) have been recently used in robot applications for grounding LLM common-sense reasoning with the robot's perception and physical abilities. In humanoid robots, memory also plays a critical role in fostering real-world embodiment and facilitating long-term interactive capabilities, especially in multi-task setups where the robot must remember previous task states, environment states, and executed actions. In this paper, we address incorporating memory processes with LLMs for generating cross-task robot actions, while the robot effectively switches between tasks. Our proposed dual-layered architecture features two LLMs, utilizing their complementary skills of reasoning and following instructions, combined with a memory model inspired by human cognition. Our results show a significant improvement in performance over a baseline of five robotic tasks, demonstrating the potential of integrating memory with LLMs for combining the robot's action and perception for adaptive task execution.

Paper 2

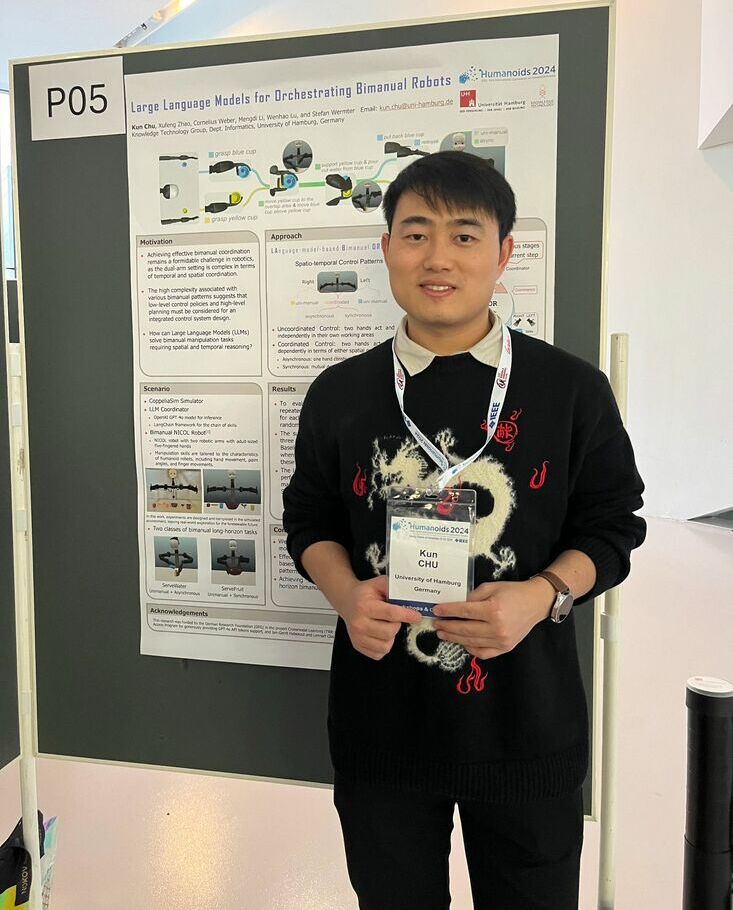

Title: Large Language Models for Orchestrating Bimanual Robots

Authors: Kun Chu, Xufeng Zhao, Cornelius Weber, Mengdi Li, Wenhao Lu, Stefan Wermter

Abstract: Although there has been rapid progress in endowing robots with the ability to solve complex manipulation tasks, generating control policies for bimanual robots to solve tasks involving two hands is still challenging because of the difficulties in effective temporal and spatial coordination. With emergent abilities in terms of step-by-step reasoning and in-context learning, Large Language Models (LLMs) have taken control of a variety of robotic tasks. However, the nature of language communication via a single sequence of discrete symbols makes LLM-based coordination in continuous space a particular challenge for bimanual tasks. To tackle this challenge for the first time by an LLM, we present LAnguage-model-based Bimanual ORchestration (LABOR), an agent utilizing an LLM to analyze task configurations and devise coordination control policies for addressing long-horizon bimanual tasks. In the simulated environment, the LABOR agent is evaluated through several everyday tasks on the NICOL humanoid robot. Reported success rates indicate that overall coordination efficiency is close to optimal performance, while the analysis of failure causes, classified into spatial and temporal coordination and skill selection, shows that these vary over tasks. The project website can be found at https://labor-agent.github.io/

Paper 3

Title: Diffusing in Someone Else's Shoes: Robotic Perspective Taking with Diffusion

Authors: Josua Spisak, Matthias Kerzel, Stefan Wermter

Abstract: Humanoid robots can benefit from their similarity to the human shape by learning from humans. When humans teach other humans how to perform actions, they often demonstrate the actions and the learning human can try to imitate the demonstration. Being able to mentally transfer from a demonstration seen from a third-person perspective to how it should look from a first-person perspective is fundamental for this ability in humans. As this is a challenging task, it is often simplified for robots by creating a demonstration in the first-person perspective. Creating these demonstrations requires more effort but allows for an easier imitation. We introduce a novel diffusion model aimed at enabling the robot to directly learn from the third-person demonstrations. Our model is capable of learning and generating the first-person perspective from the third-person perspective by translating the size and rotations of objects and the environment between two perspectives. This allows us to utilise the benefits of easy-to-produce third-person demonstrations and easy-to-imitate first-person demonstrations. The model can either represent the first-person perspective in an RGB image or calculate the joint values. Our approach significantly outperforms other image-to-image models in this task.