Learning Conversational Action Repair for Intelligent Robots (LeCAREbot)

What are the principal mechanisms required to capture the robustness and interactivity of human communication, given the situational, noisy and often ambiguous nature of natural language? And how, and to what extent, can we integrate these mechanisms within an embodied functional model that is computationally and empirically verifiable on a physical robot?

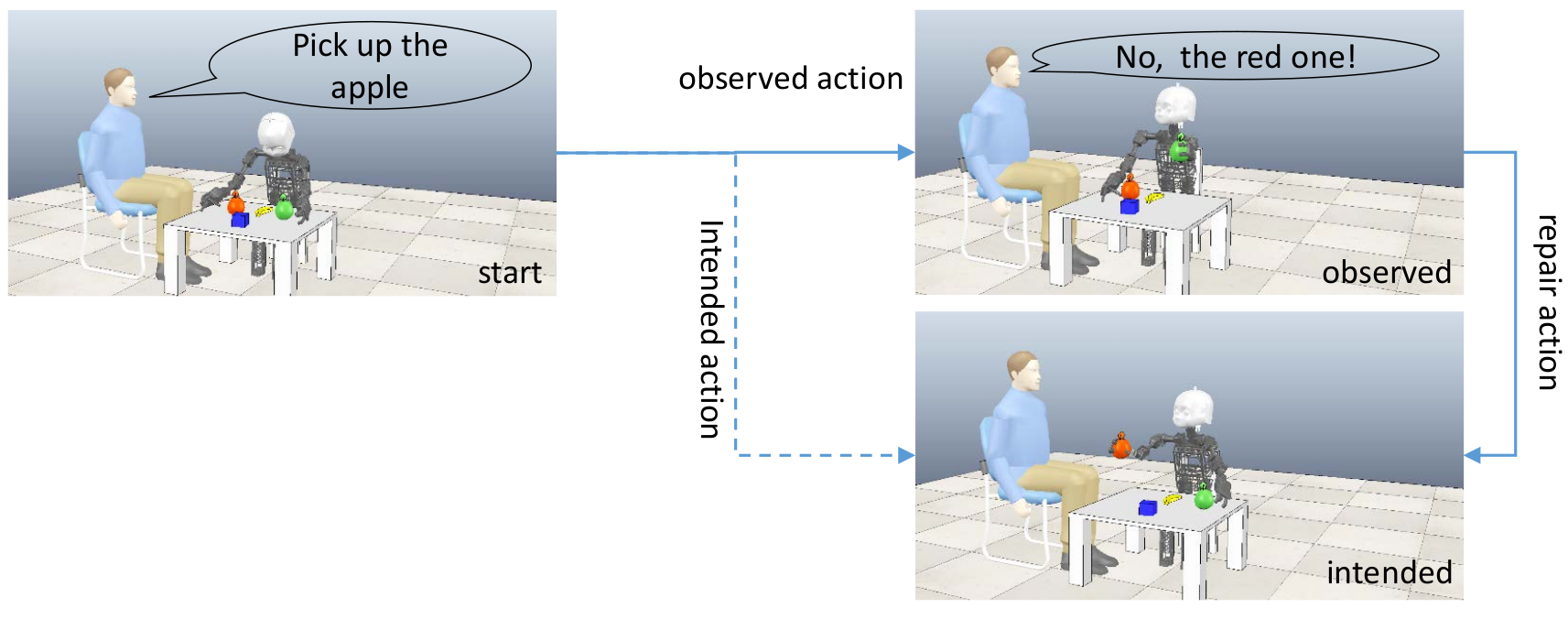

We address these research questions by investigating the linguistic phenomenon of conversational repair (CR) – a method to edit and to re-interpret previously uttered sentences that were not correctly understood by the hearer. As an example, consider the following scenario:

A human operator issues the underspecified command “Pick up the apple”. The operator wants the robot to pick up the red apple, but the robot assumes that the operator is referring to the green apple (possibly because it is closer to the robot). As the robot picks up the green apple, the observed course of action deviates from the one the operator intended. Shortly after recognizing this error, the operator utters a repair command that makes the robot pick up the red apple instead.
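The apple scenario can be made concrete with a small toy sketch. It is purely illustrative and is not the project's model: the names `SceneObject` and `resolve_reference`, the proximity heuristic, and the distance values are all assumptions introduced here. It only shows how an underspecified command can resolve to the unintended object, and how a repair utterance re-interprets the earlier command with an added constraint.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SceneObject:
    name: str
    color: str
    distance_m: float  # hypothetical distance from the robot


def resolve_reference(noun, scene, color=None):
    """Return the closest object matching the noun and, if given, the color."""
    candidates = [
        obj for obj in scene
        if obj.name == noun and (color is None or obj.color == color)
    ]
    if not candidates:
        return None  # no object in the scene matches the description
    # Assumed heuristic: with several candidates, prefer the closest one.
    return min(candidates, key=lambda obj: obj.distance_m)


scene = [
    SceneObject("apple", "red", 0.8),
    SceneObject("apple", "green", 0.3),
]

# "Pick up the apple": underspecified, so the robot falls back to proximity.
first_choice = resolve_reference("apple", scene)

# Repair, e.g. "No, the red one": the earlier command is re-interpreted
# with the additional color constraint.
repaired_choice = resolve_reference("apple", scene, color="red")

print(first_choice.color, "->", repaired_choice.color)  # green -> red
```

In this sketch the repair is a hand-written re-query. Detecting that an utterance is a repair, and deciding which part of the earlier command it revises, is left out entirely.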

Our own and other existing computational models for human-robot dialog consider conversational repair in the case of non-understandings, where the hearer fails to arrive at any interpretation, but they do not consider misunderstandings, where the hearer arrives at an interpretation that differs from the one the speaker intended, as in the scenario above. In the LeCAREbot project, we will investigate how misunderstandings can be addressed in human-robot dialog. To this end, we will develop compositional state representations for mixed verbal-physical interaction states, together with a hybrid neuro-symbolic approach to learn such state abstractions. We will combine these state abstractions with model-based hierarchical reinforcement learning to realize robotic scenarios in which conversational repair plays a critical role.

PIs: Dr. Manfred Eppe, Prof. Dr. Stefan Wermter