LOEWE Schwerpunkt "Digital Humanities"

Text as a product - Using machine learning for linguistic analysis

Motivation

Structural linguistics and recent machine learning methods share the notion of text generation from mental representations. While linguistics views the genesis of a text as the encoding of the system of meaning into a text (cf. e.g. Halliday and Hasan, 1976, p. 4), topic models constitute a family of probabilistic generative machine learning approaches that model the generation of documents from a distribution of topics. This project aims to combine both worlds: we examine whether, besides the structural analogy, there are also analogies between parts of the system of meaning and machine-learned topics.
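To make the generative view of topic models concrete, the minimal sketch below follows the standard LDA generative story: draw per-document topic proportions from a Dirichlet prior, then, for each token, draw a topic and a word from that topic. The function names, the toy topics and the prior value are hypothetical illustrations. For brevity, the topic-word distributions are fixed here rather than themselves drawn from a Dirichlet prior as in full LDA.

```python
import random

# Hypothetical toy topics: each maps a word to its probability under that topic.
TOY_TOPICS = [
    {"bank": 0.4, "money": 0.3, "loan": 0.3},   # a "finance" topic
    {"river": 0.4, "water": 0.3, "bank": 0.3},  # a "river" topic
]

def sample_dirichlet(alpha, k, rng):
    """Draw k topic proportions from a symmetric Dirichlet(alpha) via normalized Gamma draws."""
    draws = [rng.gammavariate(alpha, 1.0) for _ in range(k)]
    total = sum(draws)
    return [d / total for d in draws]

def generate_document(topics, n_words, alpha, rng):
    """Generate one document following the LDA generative story.

    Returns (topic_index, word) pairs so that the latent topic of every token stays visible.
    """
    theta = sample_dirichlet(alpha, len(topics), rng)  # per-document topic proportions
    doc = []
    for _ in range(n_words):
        z = rng.choices(range(len(topics)), weights=theta)[0]  # topic for this token
        vocab = list(topics[z])
        w = rng.choices(vocab, weights=[topics[z][v] for v in vocab])[0]  # word from topic z
        doc.append((z, w))
    return doc

if __name__ == "__main__":
    rng = random.Random(42)
    print(generate_document(TOY_TOPICS, n_words=8, alpha=0.5, rng=rng))
```

Note that "bank" can be generated by either topic; which topic produced a given token is exactly the latent information a topic model tries to recover.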

Goals

For our analysis, we annotate a text corpus with lexical cohesion relations and automatically acquire topics. We then use the topics to predict lexical cohesion: the topic membership of lexical items and significance scores between lexical items inform an automatic system for lexical chain annotation. Besides aiming at a state-of-the-art system for lexical chain identification, we analyse the semiotic interpretability of stochastic methods.
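How the two signals might be combined can be illustrated with a simple greedy chaining procedure. The sketch below is a hypothetical illustration, not the project's system: it assumes that each lexical item already has a dominant topic id (e.g. taken from a trained topic model) and that a pairwise significance score is available. `build_chains`, the `threshold` value and the toy data are invented for the example. An item joins the first existing chain that was started by an item of the same topic, or that contains a member whose significance score with the item reaches the threshold; otherwise it opens a new chain.

```python
def build_chains(items, topic_of, sig, threshold=10.0):
    """Greedy lexical chaining over lexical items given in text order.

    items:     lexical items (e.g. nouns) in the order in which they occur in the text
    topic_of:  dict item -> dominant topic id (assumed to come from a topic model)
    sig:       function (a, b) -> co-occurrence significance score of a and b
    """
    chains = []  # each chain: {"topic": topic id of its first item, "members": [...]}
    for item in items:
        topic = topic_of.get(item)  # None if the topic model has no entry for the item
        for chain in chains:
            same_topic = topic is not None and topic == chain["topic"]
            associated = any(sig(item, m) >= threshold for m in chain["members"])
            if same_topic or associated:
                chain["members"].append(item)
                break
        else:  # no chain matched: start a new one
            chains.append({"topic": topic, "members": [item]})
    return [chain["members"] for chain in chains]

# Toy example with hypothetical topic assignments and significance scores.
TOPIC_OF = {"bank": 0, "money": 0, "loan": 0, "river": 1, "water": 1}
SCORES = {frozenset(("stream", "river")): 15.0}

def toy_sig(a, b):
    return SCORES.get(frozenset((a, b)), 0.0)

text = ["bank", "money", "river", "loan", "water", "stream", "bank"]
print(build_chains(text, TOPIC_OF, toy_sig))
# → [['bank', 'money', 'loan', 'bank'], ['river', 'water', 'stream']]
```

Here "stream" has no topic entry but still joins the second chain through its significance score with "river". Greedy first-match attachment keeps the sketch short; a practical system would more likely score all candidate chains and weigh the two features against each other.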

Methods

This project examines the correspondence between linguistic concepts and automatically extracted topic models. Specifically, we utilize LDA topics (Blei & Lafferty, 2009) to model lexical cohesion. For this, we annotate texts with the cohesion relation and use topics and lexical co-occurrence statistics as features to assess the cohesion of a text and to compute lexical chains.
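Lexical co-occurrence statistics are commonly turned into significance scores with a test such as Dunning's log-likelihood ratio. Which measure is used is not specified here, so the sketch below assumes the log-likelihood ratio (G²) over sentence-level co-occurrence counts; `cooccurrence_counts` and `log_likelihood` are hypothetical helper names. A score near zero means that two words co-occur about as often as expected by chance, while large scores mean the observed counts deviate strongly from chance. For cohesion, one would typically keep only pairs that co-occur more often than expected.

```python
import math

def cooccurrence_counts(sentences, a, b):
    """Count sentences containing a, containing b, containing both, and the total."""
    token_sets = [set(s) for s in sentences]
    n_a = sum(a in s for s in token_sets)
    n_b = sum(b in s for s in token_sets)
    n_ab = sum(a in s and b in s for s in token_sets)
    return n_a, n_b, n_ab, len(token_sets)

def log_likelihood(n_a, n_b, n_ab, n):
    """Dunning's log-likelihood ratio G^2 for the 2x2 co-occurrence table of a and b."""
    table = [[n_ab, n_a - n_ab],                  # a present: with b / without b
             [n_b - n_ab, n - n_a - n_b + n_ab]]  # a absent:  with b / without b
    row_sums = [n_a, n - n_a]
    col_sums = [n_b, n - n_b]
    g2 = 0.0
    for i in range(2):
        for j in range(2):
            observed = table[i][j]
            if observed > 0:  # cells with 0 observations contribute 0 (0 * ln 0 := 0)
                expected = row_sums[i] * col_sums[j] / n
                g2 += observed * math.log(observed / expected)
    return 2.0 * g2

sentences = [
    ["the", "river", "bank", "was", "flooded"],
    ["water", "rose", "over", "the", "river", "bank"],
    ["the", "bank", "granted", "a", "loan"],
    ["money", "is", "scarce"],
]
print(log_likelihood(*cooccurrence_counts(sentences, "river", "bank")))
```

On real corpora, such scores are computed for all word pairs at once and thresholded; the toy corpus above is far too small for the value to be meaningful.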

Unlike syntactically inspired projects, we focus on semantic aspects of texts. Further, we use topic representations to quantify the experiential function of documents.

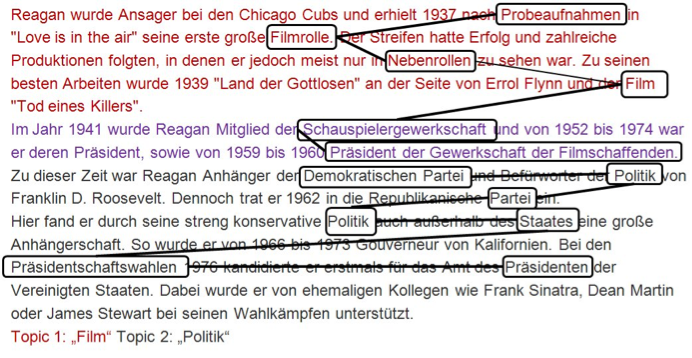

Figure: sample lexical chains

References

Cohesion in English

M.A.K. Halliday and R. Hasan

In: English Language Series, Longman, London, 1976

Topic Models

D. Blei and J. Lafferty

In: A. Srivastava and M. Sahami, editors, Text Mining: Theory and Applications. Taylor and Francis, 2009

People

- Chris Biemann, Principal Investigator

- Iryna Gurevych, Principal Investigator

- Martin Riedl, Doctoral Researcher

Related pages

- Lexical Chains for German: Data and software for lexical chain annotation and automatic assignment

- Umbrella project page "Digital Humanities"

Funding

The LOEWE Research Center "Digital Humanities" was funded from 2011 to 2014 by the Hessian excellence program "Landes-Offensive zur Entwicklung Wissenschaftlich-ökonomischer Exzellenz" (LOEWE).

Publications

- Bär, D., Biemann, C., Gurevych, I., Zesch, T. (2012): UKP: Computing Semantic Textual Similarity by Combining Multiple Content Similarity Measures (Best Participating System). In: Proceedings of the 6th Int'l Workshop on Semantic Evaluation, in conjunction with the 1st Joint Conf. on Lexical and Computational Semantics, June 2012, Montreal, Canada

- Biemann, C., Riedl, M. (2013): Text: Now in 2D! A Framework for Lexical Expansion with Contextual Similarity. Journal of Language Modelling 1(1):55–95

- Miller, T., Biemann, C., Zesch, T., Gurevych, I. (2012): Using Distributional Similarity for Lexical Expansion in Knowledge-based Word Sense Disambiguation. Proceedings of COLING-12, Mumbai, India

- Rauscher, J., Swiezinski, L., Riedl, M., Biemann, C. (2013): Exploring Cities in Crime: Significant Concordance and Co-occurrence in Quantitative Literary Analysis. Proceedings of the Computational Linguistics for Literature Workshop at NAACL-HLT 2013, Atlanta, GA, USA

- Remus, S., Biemann, C. (2013): Three Knowledge-Free Methods for Automatic Lexical Chain Extraction. Proceedings of NAACL-2013, Atlanta, GA, USA

- Riedl, M., Biemann, C. (2012): How Text Segmentation Algorithms Gain from Topic Models. Proceedings of the Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies (NAACL-HLT 2012), Montreal, Canada

- Riedl, M., Biemann, C. (2012): Sweeping through the Topic Space: Bad luck? Roll again! In: Proceedings of the Joint Workshop on Unsupervised and Semi-Supervised Learning in NLP held in conjunction with EACL 2012, Avignon, France

- Riedl, M., Biemann, C. (2012): TopicTiling: A Text Segmentation Algorithm based on LDA. Proceedings of the Student Research Workshop of the 50th Meeting of the Association for Computational Linguistics, Jeju, Republic of Korea

- Riedl, M., Biemann, C. (2012): Text Segmentation with Topic Models. Journal for Language Technology and Computational Linguistics (JLCL), Vol. 27, No. 1, pp. 47–70, August 2012

- Szarvas, G., Biemann, C., Gurevych, I. (2013): Supervised All-Words Lexical Substitution using Delexicalized Features. Proceedings of NAACL-2013, Atlanta, GA, USA